Duration of Experiment

What is the duration of the experiment? Is it long time? How much long before participants gives any feedback? This our finalize subject of the experiment. We will also be talking about exposure. How much users you want them to see your experimental features, will affect the duration of your experiment.

Duration vs Exposure¶

When talking about the duration of the experiment, you need to decide when you run your experiment, is it by weekend, weekday, school season, or holiday. Because this particular traffic is different for each day, and eventually affect your traffic. The second is what proportion of traffic that you want to send to your experiment. And the third thing is the duration of your experiment.

Let's say that you want a million samples but only have a hundred thousand visitors on any given day, that means you want to have 10 day experiment. But there's couple of reasons why we should not just run the whole population. And that is safety. You don't want to your experimental feature exposed because you're not selecting participants properly, and it could be leaked through news or blogging. And experimental features may not satisfy all users.

There's also benefit of choosing smaller proportion, like for example active users. These are the users that could be experimented throughout the year (holiday, weekday, weekend). For example, if you have range of feature parameter in your modelling, you can test smaller proportion at the same time, and when the results varies throughout season, you can see comparable results in order.

If you're already a data scientist working in a company, you should know that traffic is much higher in weekday than those in the weekend. Suppose you want to run an experiment in 1 million pageviews but only receive 500.000 pageviews. Then that case, you run your experiment in two days. But since weekday and weekend have variation, you should mixed it and run for at least 3 days. It might take longer that considering for safety reasons as stated, you want to run it at less traffic.

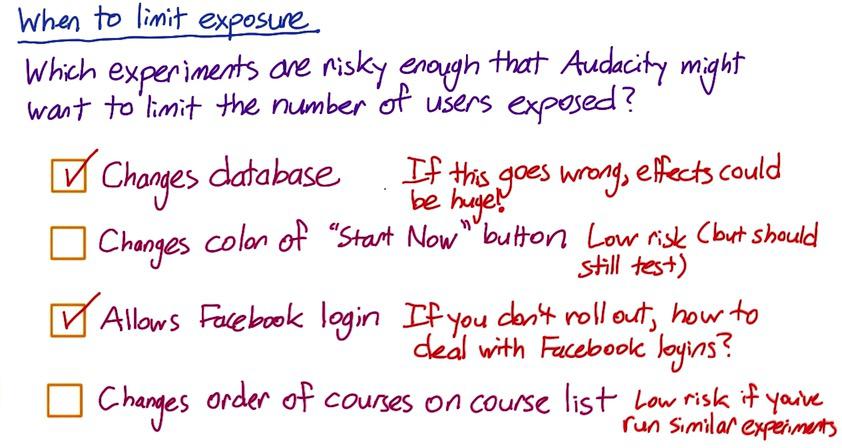

Screenshot taken from Udacity, A/B Testing, When to Limit Exposure

These examples shows 2 aforementioned safety reasons. Changes database might not risky at all, but if something goes wrong, the site can goes down, and it will disatisfy all users, so you might want to limit users. Change color of a button won't be likely huge leak that some news article or blog will write about. Allow Facebook login can be risky, as changes the databases. Change the rank order of a course won't be likely detected by the users.

Learning Effects¶

If you apply some changes in your A/B experiments, users may spend some time to adapt to your changes. This is called learning effects. Learning effects could be lead to two things, version changes, where users surprised in negative perspective, like for example "Oh crap! Why all of this change???" or novelty changes, "Look at that new things, excited to try these out!". This won't be good for all users, and sometimes during experiments, they change sides, so for safety reasons you want to limit your users. The other reasons is this learning effects, if you apply to worldwide users will take usually long time, which is not the luxury that you have.

Learning effects should be watched out in variety of reasons. First is unit of diversion, if you want to see some stateful across experiments, we might want to use user-id or a cookie. The second is about how long and how often users see the changes. So this could be used as a cohort instead of population. The other things is safety reason, spesifically about risk. As you make bigger changes, it potentially lead to bigger risk, and again it might be harder for users to adapt, so again you want to limit your users.

There's two other things that we could do in related to learning effects, pre-period and post-period. In pre-period you run kind of retrospective analysis with A/A tests. You want to see variability, identify lurking variables before you run your experiments. It's useful to know difference of learning effect before you run your experiment. Post-period is another A/A tests after you run your experiment. If you detect some difference, you can know for sure it caused by learning effect difference in experiment period. You want to see changes to users after you run your experiment. This is often pretty advanced, and may cautioned by A/B testing beginner.

Summary¶

So with previous blogs, we have learned about how to design A/B testing experiments. The advice is you have to try it out. Yes, you have learned about subject, population, size, and duration. Keep in mind of that material to begin your experiment. Sometimes analytical calculation doesn't serve much in reality, but it serve as intuition.

The other things to keep in mind is choosing your metric properly with unit of diversion. The variability could be huge, and even impossible to compute the metric. You also want to do iterative processing, see which suitable for metric, the results satisfy you, or in the business metric. Sometimes you get the best results in the previous experiments. These iterative process will eventually building out intuituion, you're able to make guesses that could speedup the process. We also have talked about invariance metric, which is sanity checking. If you choose cookie as your subject, and population in English. You could do sanity checking to count number of English cookie both in experimental and control, if they're roughly equal, then you have run your experiment properly. And finally watchout for surprises. There's one experiment that someone tried out they figured only affect 5% of the time, turns out in the first day it affect 80%. This could be potentially good, or bad. You have to investigate what's went wrong.

Disclaimer:

This blog is originally created as an online personal notebook, and the materials are not mine. Take the material as is. If you like this blog and or you think any of the material is a bit misleading and want to learn more, please visit the original sources at reference link below.

References:

- Diane Tang and Carrie Grimes. Udacity